Advertising, social media marketing, and SEO techniques are constantly improving. New tools are emerging for marketers, product managers, and SMBs, including those using machine learning and artificial intelligence.

Today, staying in business means exploring innovations and adopting new MarTech tools that automate and streamline marketing processes. Google already uses ML-based ranking algorithms, and its search results are becoming increasingly “smart.”

Since search engines have “learned” to analyze large amounts of data to provide people with the information they might be interested in, marketers and SEO specialists need to learn how to show that their websites are the most relevant to those interests.

Modern marketing also depends heavily on analyzing large amounts of data. It’s no longer enough just to collect statistics and read simple quantitative reports, and a person can no longer cope alone with a comprehensive analysis of such diverse information. This is why tools based on machine learning are increasingly being introduced in the marketing sector.

Such tools can be especially useful if you continuously analyze incoming information to improve the following:

- Traffic

- Search results

- Conversion rates

- Other critical indicators in real time

SEO specialists, web and targeting marketers, and many other experts involved in promotion and sales have a strong need for tools based on large-scale data analysis, machine learning, and neural networks. This kind of sophisticated analysis helps keep performance high in today’s highly dynamic marketplace.

Such tools already exist, and some are offered by Google (e.g. ML-based keyword analysis tools). Other systems are developed by independent experts, some of which are becoming extremely popular in the professional environment.

Let’s look at the main machine learning methods used in SEO and how they are applied today.

1. Support Vector Machines

Classification is a process that significantly facilitates segmentation. Support-vector machines (SVM) are a set of prediction algorithms that classify customer information, making intelligent segmentation possible. The list of features can include a variety of parameters, such as age, purchase history, priority channels, etc.

SVM learns from labeled examples, which makes it a set of supervised learning methods used for:

- Classification

- Regression

- Outlier detection

For example, Mailchimp, a popular customer relationship management (CRM) tool, reportedly relies on such algorithms. It uses an SVM algorithm to predict user behaviour, which makes it possible to estimate which segments are most likely to have a high customer lifetime value (LTV) and what their cost per acquisition (CPA) will be.
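
To make the idea concrete, here is a minimal sketch of a linear SVM trained with sub-gradient descent on the hinge loss. It is not how Mailchimp or any other product implements it. The customer features, the labels, and the function names `train_linear_svm` and `predict` are all invented for illustration, and a real project would more likely use a library such as scikit-learn.

```python
# Minimal linear SVM: sub-gradient descent on the regularized hinge loss.
# All customer data below is made up for illustration.

def train_linear_svm(X, y, lr=0.01, reg=0.01, epochs=500):
    """X: list of feature lists, y: labels in {-1, +1}. Returns (weights, bias)."""
    w = [0.0] * len(X[0])
    b = 0.0
    for _ in range(epochs):
        for xi, yi in zip(X, y):
            margin = yi * (sum(wj * xj for wj, xj in zip(w, xi)) + b)
            if margin < 1:
                # Point is misclassified or inside the margin: move the boundary.
                w = [wj + lr * (yi * xj - reg * wj) for wj, xj in zip(w, xi)]
                b += lr * yi
            else:
                # Point is safely classified: only apply regularization.
                w = [wj - lr * reg * wj for wj in w]
    return w, b

def predict(w, b, x):
    """Return +1 or -1 depending on which side of the boundary x falls."""
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) + b >= 0 else -1

# Hypothetical features: [orders in the last year, average order value in $100s]
X = [[1, 0.2], [2, 0.3], [1, 0.5], [8, 1.5], [9, 2.0], [10, 1.8]]
y = [-1, -1, -1, 1, 1, 1]  # +1 = "high lifetime value" segment

w, b = train_linear_svm(X, y)
print(predict(w, b, [9, 1.9]), predict(w, b, [1, 0.3]))
```

The two segments here are deliberately easy to separate. With real customer data, features usually need scaling first, and a non-linear kernel may work better than a straight-line boundary.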

You can also commission a custom solution built on such technologies for your team. In that case, work with both the developers and a software testing company to get the exact result you expect.

2. Information Retrieval

Keywords, their proper selection, and their application are still relevant today. Simple solutions are often the most effective, even in an era of sophisticated data analysis and machine learning algorithms.

Modern algorithms for information retrieval and relevance assessment in Google and other search engines continue to use keywords to assess how relevant content is to user queries. These algorithms are robust and accurate, and search engines are constantly “learning.” Therefore, it’s essential to use modern tools when analyzing keywords.

For example, SE Ranking is a software system popular among SEO specialists that helps marketers optimize a website for better positions in SERPs.

Elasticsearch, in turn, is a scalable full-text search and analytics engine that lets you quickly store, search, and analyze large amounts of data in near real time. Such a tool allows marketers to get a complete list of keywords based on the analysis of user input.

The retrieval process consists of four steps:

- Get the user’s search query

- Break down the query into keywords

- Find out the list of relevant documents

- Use the relevance indicator to rank each document

In the last step, the system combines several criteria:

- Term frequency (how often the keyword appears in the document, relative to the document’s length)

- Inverse document frequency (a keyword that appears in most documents in the index says little about any one of them, so it carries less weight than a rare keyword)

- Coordination (how many words from the user’s original query are present in the document)

The resulting score is then used to pre-select and rank all documents.
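
The four steps and the scoring criteria can be sketched in a few lines. This is a simplified illustration, not Elasticsearch’s or Google’s actual formula. The function names, the sample documents, and the exact way the criteria are combined are assumptions made for the example.

```python
import math

def tokenize(text):
    """Step 2: break text into lowercase keywords."""
    return text.lower().split()

def rank(query, documents):
    """Steps 3 and 4: score every document, return (score, index) pairs, best first."""
    query_terms = tokenize(query)
    doc_tokens = [tokenize(d) for d in documents]  # assumes no empty documents
    n_docs = len(documents)
    results = []
    for idx, tokens in enumerate(doc_tokens):
        score = 0.0
        matched = 0
        for term in query_terms:
            tf = tokens.count(term) / len(tokens)  # term frequency
            if tf == 0:
                continue
            matched += 1
            docs_with_term = sum(1 for t in doc_tokens if term in t)
            idf = math.log(n_docs / docs_with_term) + 1.0  # rarer term, higher weight
            score += tf * idf
        coordination = matched / len(query_terms)  # share of query words found
        results.append((score * coordination, idx))
    return sorted(results, reverse=True)

docs = [
    "running shoes for trail running",
    "leather shoes for the office",
    "trail maps and hiking guides",
]
ranking = rank("trail running shoes", docs)  # step 1: the user's query
print(ranking)
```

The first document comes out on top because it contains all three query words, including “running,” which appears in no other document and therefore carries the highest weight.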

That’s why SEO specialists need to choose keywords intelligently and use them carefully on website pages. This is where keyword analysis, available in Google’s tools for webmasters or in similar products, can help.

3. K-Nearest Neighbors Algorithm

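The K-Nearest Neighbors (“k-NN” or “KNN”) algorithm is one of the simplest non-parametric supervised learning methods. New data in k-NN is classified based on how similar it is to existing labeled data. The algorithm does not build a model in advance. It simply stores the examples and does all the work when a new item arrives, which is why it’s also called a “lazy learning algorithm.”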

How KNN works:

- You get an image of a fruit that looks like an apple or a pear. You need to know which category to assign it to;

- The KNN model measures how close the characteristics of the new fruit are to those of already labeled “pear” and “apple” examples and picks the k closest ones;

- The system assigns the new fruit to the category that most of those k nearest neighbours belong to.
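
As a rough sketch of these steps, the snippet below classifies a fruit by a majority vote among its three nearest labeled examples. The features (weight and height-to-width ratio), the sample values, and the function name `knn_classify` are hypothetical.

```python
import math
from collections import Counter

def knn_classify(sample, labeled_points, k=3):
    """Label `sample` by majority vote among its k nearest labeled neighbours."""
    by_distance = sorted(labeled_points, key=lambda p: math.dist(sample, p[0]))
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]

# Hypothetical features: (weight in grams, height-to-width ratio).
# Note: unscaled features let weight dominate the distance; real data
# is usually normalized first.
fruits = [
    ((150, 1.0), "apple"), ((160, 0.95), "apple"), ((170, 1.05), "apple"),
    ((180, 1.4), "pear"), ((190, 1.5), "pear"), ((200, 1.45), "pear"),
]
print(knn_classify((165, 1.0), fruits))
```

There is no training step here at all: every prediction scans the stored examples, which is the “lazy” part and also why k-NN can become slow on very large datasets.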

Usually, k-NN is used when data must be classified based on predefined characteristics. For example, KNN algorithms are used in video streaming recommendation systems or in “recommended products” blocks on major marketplaces. They “evaluate” what you’re interested in, compare the features of the video or product with those of other items, and recommend the most similar ones. The same logic applies to social networks: to get lots of Instagram likes, for example, you need to offer users the content they are looking for.

4. Learning to Rank

Learning to rank (LTR), or machine-learned ranking, is a class of algorithms that train a model to order search results by their relevance to a query. When users type a query in the search bar, they expect the output to be filled with the documents closest to what they need. And this is where LTR helps a lot.

LTR can be divided into three methods: Pointwise, Pairwise, and Listwise:

- Pointwise: each document is scored separately, for example by the presence and number of keywords in the text;

- Pairwise: documents are compared in pairs, and the model learns which of the two should rank higher. This can be compared to a test where you answer most questions and get a good score, but then you see that your neighbour answered more questions. Your score is unimpressive next to theirs, so you rank lower;

- Listwise: the algorithm, which is more complex to understand, looks at the whole list of results at once and analyzes ranking probabilities based on the relevance of the search results.
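
The pairwise idea can be sketched as follows. This is a simple perceptron-style update, which stands in for production pairwise methods rather than reproducing any one of them. The feature names, relevance grades, and function names are assumptions for the example.

```python
def train_pairwise(docs, epochs=50, lr=0.1):
    """docs: list of (features, relevance). Learn weights so that, in every pair,
    the more relevant document receives the higher score."""
    w = [0.0] * len(docs[0][0])
    for _ in range(epochs):
        for fa, ra in docs:
            for fb, rb in docs:
                if ra <= rb:
                    continue  # only look at pairs where a should outrank b
                diff = [x - y for x, y in zip(fa, fb)]
                if sum(wi * di for wi, di in zip(w, diff)) <= 0:
                    # The pair is in the wrong order: nudge the weights.
                    w = [wi + lr * di for wi, di in zip(w, diff)]
    return w

def score(w, features):
    """Linear relevance score of one document."""
    return sum(wi * xi for wi, xi in zip(w, features))

# Hypothetical features per document: [keyword match score, page speed score]
# Relevance grades: 2 = very relevant, 1 = somewhat relevant, 0 = irrelevant
training = [([0.9, 0.8], 2), ([0.6, 0.9], 1), ([0.2, 0.3], 0)]
w = train_pairwise(training)
ranked = sorted(training, key=lambda d: score(w, d[0]), reverse=True)
print([relevance for _, relevance in ranked])
```

The model never learns an absolute score for a document. It only learns weights that put each pair in the right order, which is exactly what matters for a results page.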

5. Decision Trees

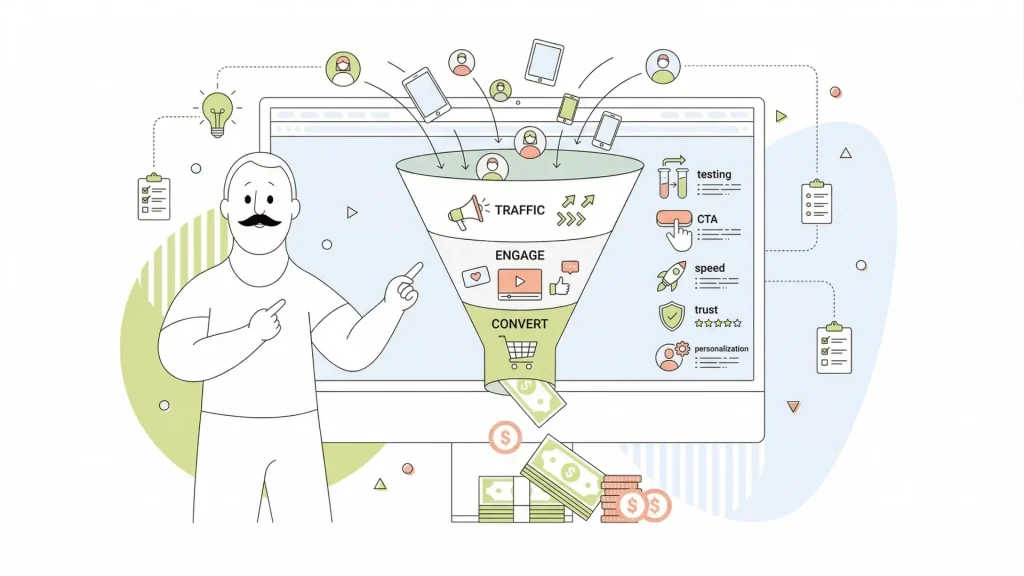

Decision trees are a method used in predictive modelling. In marketing, the technique is used, for example, to analyze the buyer’s progress through the sales funnel. In this case, the analysis is performed according to several criteria:

- Behavioural triggers – analysis of the links and fields the user clicks on and fills out;

- Attribute-based value – information about user location and other demographics;

- Numerical thresholds – how much the user spends on a purchase and how much more they will be willing to spend on a second visit.

Decision tree algorithms provide quality results and are very easy to implement. Therefore, they are used for:

- Classification and regression – handling categorical and continuous values in the same model (e.g., examining gender and annual income together);

- Processing multiple parameters simultaneously – each “node” in the tree can represent a single parameter without overloading the entire model;

- Visual and interpretive diagnostics – analysis of patterns and relationships between values.

A decision tree is easy to read when it has a small number of “branches.” However, the more parameters you enter for analysis, the harder the tree becomes to interpret, and you stop seeing the whole picture. For this reason, automated systems perform the complex analysis, while marketers work with reports that show “trees” with only a few “branches.”
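
To show how a single “branch” is chosen, here is a minimal sketch of the core step of tree learning: trying every feature and threshold and keeping the split that separates the outcomes best, measured by Gini impurity. The visitor data and the function names are invented for the example, and a full tree would repeat this step on each resulting group.

```python
def gini(labels):
    """Gini impurity of a list of 0/1 labels (0.0 means a perfectly pure group)."""
    if not labels:
        return 0.0
    p = sum(labels) / len(labels)
    return 2 * p * (1 - p)

def best_split(rows, labels):
    """Try every feature/threshold pair and return the one with the lowest
    weighted Gini impurity as (impurity, feature_index, threshold)."""
    best = None
    for f in range(len(rows[0])):
        for threshold in sorted({r[f] for r in rows}):
            left = [y for r, y in zip(rows, labels) if r[f] <= threshold]
            right = [y for r, y in zip(rows, labels) if r[f] > threshold]
            impurity = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
            if best is None or impurity < best[0]:
                best = (impurity, f, threshold)
    return best

# Hypothetical visitors: [clicked the pricing link (0/1), first order value in $]
visitors = [[0, 20], [0, 35], [1, 40], [1, 80], [1, 120], [0, 90]]
purchased_again = [0, 0, 0, 1, 1, 1]

impurity, feature, threshold = best_split(visitors, purchased_again)
print(feature, threshold, impurity)
```

In this toy data the numerical threshold (first order value above 40) separates repeat buyers perfectly, while the behavioural trigger alone does not, so the tree would branch on order value first.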

6. K-Means Clustering Algorithms

K-means clustering is an unsupervised machine learning technique. In simple terms, this type of machine learning is used to break down an array of unlabeled objects into meaningful categories.

For example, with the k-means clustering method, you can divide your customer base into smaller categories based on selected parameters such as average check, gender, age, preferred product categories, etc.

Such an approach helps you tailor marketing campaigns and promotions to each customer segment, making your marketing budget more effective.

A defining feature of k-means clustering is that you choose in advance how many clusters (categories) the database will be divided into.
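
A bare-bones version of the algorithm fits in a few lines. The sketch below alternates between assigning each customer to the nearest cluster centre and moving each centre to the mean of its customers. The customer values and the naive choice of starting centres are assumptions for illustration. Libraries use smarter initialization.

```python
import math

def k_means(points, k, iterations=10):
    """Plain k-means. Returns (centroids, cluster index for each point)."""
    centroids = [tuple(p) for p in points[:k]]  # naive init: the first k points
    assignment = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach every point to its nearest centroid.
        assignment = [
            min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignment) if a == c]
            if members:
                centroids[c] = tuple(sum(col) / len(members) for col in zip(*members))
    return centroids, assignment

# Hypothetical customers: (age, average check in dollars)
customers = [(22, 30), (45, 210), (25, 35), (48, 190), (23, 40), (50, 205)]
centroids, clusters = k_means(customers, k=2)
print(clusters)
print(centroids)
```

Here `k=2` is the predefined number of segments. Nobody told the algorithm which customers belong together. It found a “young, small check” group and an “older, large check” group on its own.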

7. Convolutional Neural Networks

Convolutional neural networks (CNNs) allow computer systems to learn about images almost the same way as people.

Of course, no computer can genuinely see an image of, say, an apple. Instead, the system has access only to numbers (pixel values) that describe the picture. Finding and identifying a particular object from those numbers is known as object detection.

CNN allows a computer to be trained to recognize a new numerical image pattern based on an analysis of millions of other images of the same object. The larger the database of similar objects, the more accurate the identification.

Thus, if the information system has a million different images of apples in its database, a CNN will be able to identify a new photo of an apple as “this is an apple.” The analysis is not a sequence of separate classification steps. As with a human, many parameters are weighed simultaneously, and a conclusion is reached quickly.
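
To illustrate what “an image as numbers” means, here is a minimal sketch of the convolution operation that gives CNNs their name. A small grid of weights (a kernel) slides over the pixel values and produces a map of where a pattern occurs. The tiny image and the hand-picked kernel are made up for the example. In a real CNN, the kernel values are learned from training images.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

# A tiny 4x4 grayscale "image": dark (0) on the left, bright (9) on the right.
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]
# Hand-picked kernel that responds to dark-to-bright vertical edges.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]
feature_map = convolve2d(image, vertical_edge)
print(feature_map)  # → [[0, 18, 0], [0, 18, 0], [0, 18, 0]]
```

The middle column lights up because that is where the brightness changes. A CNN stacks many such learned filters, so early layers pick up edges and later layers combine them into shapes such as “apple.”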

One of the most popular applications of CNNs is facial recognition. Such networks are also used to analyze documents and handwriting: a scanned document is compared with a massive amount of handwriting data to determine whose handwriting it is.

8. Naïve Bayes

The naïve Bayes (NB) algorithm is based on Bayes’ famous rule, which gives the probability of outcome “A” given that “B” has been observed. The algorithm is called “naive” because it assumes that the predictor variables are independent of each other.

This algorithm helps determine the likelihood of a successful lead magnet, ad campaign, segmentation, or keywords in information systems used by salespeople and marketers. However, the analysis requires that you know the relevant characteristics. This could be age, income level, average check, purchase history, geolocation, and other data used to segment your customer base.

Without going into complicated math, the NB algorithm answers two questions:

- Will the selected type of people behave like “X”?

- Will this type of content help get “X” results?
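
The first question can be sketched with a tiny categorical naive Bayes classifier. The lead data, the two features, and the function names below are hypothetical, and add-one smoothing is used so that an unseen combination does not produce a zero probability.

```python
from collections import Counter, defaultdict

def train_naive_bayes(rows, labels):
    """Count how often each feature value appears together with each label."""
    label_counts = Counter(labels)
    value_counts = defaultdict(Counter)  # (feature index, label) -> value counts
    for row, label in zip(rows, labels):
        for i, value in enumerate(row):
            value_counts[(i, label)][value] += 1
    return label_counts, value_counts

def predict_proba(model, row, n_values=2):
    """Probability of each label for `row`, with add-one (Laplace) smoothing.
    n_values: how many distinct values a feature can take (assumed 2 here)."""
    label_counts, value_counts = model
    total = sum(label_counts.values())
    scores = {}
    for label, count in label_counts.items():
        p = count / total  # prior: how common the label is overall
        for i, value in enumerate(row):
            # "Naive" step: multiply per-feature likelihoods as if independent.
            p *= (value_counts[(i, label)][value] + 1) / (count + n_values)
        scores[label] = p
    norm = sum(scores.values())
    return {label: s / norm for label, s in scores.items()}

# Hypothetical leads: (age group, came from an email campaign) -> outcome
rows = [("young", "yes"), ("young", "yes"), ("young", "no"),
        ("senior", "no"), ("senior", "no"), ("senior", "yes")]
labels = ["converted", "converted", "converted", "lost", "lost", "converted"]

model = train_naive_bayes(rows, labels)
probs = predict_proba(model, ("young", "yes"))
print(probs)
```

With only six leads the numbers mean little, which is why the method needs large amounts of behavioural data before its probabilities can be trusted.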

For Naïve Bayes to work effectively, large amounts of behavioural data are required, including social media activity, online customer conversations, etc.

Processing customer dialogues with this algorithm can help predict customer feedback on products and service levels, influence marketing trends, generate interest in the brand on social media, and predict response rates in direct marketing.

9. Principal Component Analysis

When analyzing big data, classification leads to complex segmentations. Tools are therefore needed that automatically find strong or weak correlations between features, for example by plotting them on a graph and fitting a trend line.

A person can effectively analyze a graph by comparing 2-3 segments. But what if you need to study more than 30 features to research your target audience? For this purpose, principal component analysis (PCA) algorithms are used.

PCA, combined with machine learning on big data, is a powerful tool for analyzing multidimensional datasets in which a large number of features must be examined together.

The algorithm combines correlated features into a small number of new axes called principal components. When the data is plotted along these components, marketers or SEO experts can see groups of related points, and the distance between the groups suggests strong or weak relationships.

As a result, the marketer does not analyze individual features but a handful of components determined by the PCA algorithm. This way, we can find the most correlated features and get the most value for the best targeting.
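
As a rough illustration, the sketch below finds the first principal component of a small dataset using power iteration on the covariance matrix. The three features and their values are invented, and a real analysis would normally rely on a numerical library rather than hand-written loops.

```python
def first_principal_component(rows, iterations=100):
    """Return the unit vector along which the centered data varies the most."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    # Covariance matrix of the features.
    cov = [
        [sum(r[a] * r[b] for r in centered) / (n - 1) for b in range(d)]
        for a in range(d)
    ]
    # Power iteration: repeated multiplication by cov converges to the
    # eigenvector with the largest eigenvalue (the first component).
    v = [1.0] * d
    for _ in range(iterations):
        v = [sum(cov[a][b] * v[b] for b in range(d)) for a in range(d)]
        norm = sum(x * x for x in v) ** 0.5
        v = [x / norm for x in v]
    return v

# Hypothetical, mean-centered features: [site visits, emails opened, age]
data = [[-1.2, -1.0, 0.3], [-0.5, -0.6, -0.8], [0.1, 0.2, 1.1],
        [0.6, 0.5, -0.9], [1.0, 0.9, 0.3]]
component = first_principal_component(data)
print([round(x, 2) for x in component])
```

In this toy data, site visits and emails opened rise and fall together, so the first component gives both a large weight and age a weight close to zero. In other words, two correlated features collapse into one “engagement” axis, which is the kind of simplification PCA offers when there are 30 features instead of three.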

10. Surfer NLP: Optimize Content for SEO with Deep Learning

Finally, let’s review an out-of-the-box solution that uses many of the machine learning and big data analytics algorithms described above.

Some background first: since 2019, Google has used BERT, a natural language processing (NLP) model, to analyze documents and determine their relevance. Thus, even if you have a high-quality list of keywords and content that corresponds to the topic, you will achieve high results in SERPs only if your text is well written, readable, and user-friendly.

Google also offers a general-purpose NLP API that SEO specialists and website owners can use. It helps create quality content by revealing the text’s structure and meaning.

On the one hand, the tool is convenient and valuable, and many users report an increase in search engine rankings. On the other hand, its handling of context could be better, and much of the data in the analysis results is of little use when writing texts.

This is why a newer engine, Surfer NLP, has won more enthusiastic followers in the SEO community than Google NLP.

Surfer NLP combines the capabilities of Google NLP, classic SEO tools, machine learning, and additional tools aimed at keyword analysis, relevance, and natural language.

For a non-specialist, Surfer NLP is a handy tool with a simple interface that helps improve existing content and write SEO-optimized texts from the start. Thanks to its built-in ML tools, it “evolves” and changes along with similar Google tools. Therefore, quality texts written with the help of Surfer NLP can efficiently promote your website and bring it to the top in SERPs.

How Surfer NLP works:

- A copywriter writes plain text in the content editor

- The system analyzes the content and provides recommendations

- Each type of recommendation is combined into a group, which can be opened to read more detailed tips on improving the content

As a result, writers create optimized and engaging content, reduce the number of errors, including stylistic ones, and get ideas for what else to write about. SEO specialists get well-optimized content and, at the same time, a tool for quick analysis.

Bottom Line

Machine learning technology, despite all its amazing possibilities, has only just begun to develop. Its implementation in sales, marketing, and SEO has already shown excellent results. However, marketers constantly need better tools for assessing consumer sentiment and behaviour.

Machine learning and neural network tools continuously analyze consumer markets, accumulate new data, and discover new insights. This information is increasingly being used by large market players, small agencies, and medium-sized businesses.

Understanding your target audience will allow you to think through your strategy better, deliver more relevant advertising, improve service quality, and increase sales. In turn, the ability to predict the most likely reaction to a seller’s specific actions will increase the return on investment in advertising, marketing, and SEO promotion.

Author bio:

Roy Emmerson is a technology enthusiast, a loving father of twins, a programmer in a custom software company, co-founder of TechTimes.com and marketing specialist of llc.services.